If you’re an experienced SEO specialist looking to take your technical SEO skills to the next level, you’re in the right place. In today’s highly competitive online landscape, basic technical SEO techniques are no longer enough to achieve top rankings in search engine results pages. We’ve created this comprehensive guide to help you go beyond the basics and master advanced technical SEO techniques. From optimizing website architecture to improving website speed and security, we’ll cover everything you need to know to give your website a competitive edge and stay ahead of the game.

So, get ready to take your technical SEO skills to the next level with our guide to advanced techniques for experienced SEO specialists.

Rapid Google Indexing: Tips to Ensure Your Website is Found Quickly

To improve your website’s visibility and SEO rankings, you must ensure that Google indexes your website quickly. To achieve this, you need to follow a few critical steps.

Understanding Crawling, Indexing, and Ranking

Crawling, indexing, and ranking are the three essential steps that Google uses to discover websites and display them in search results. Crawling is when Google discovers your page and fetches it, indexing is when Google analyzes the page and stores it in its index, and ranking is the final step, in which Google decides where to show your page in its search results.

Why Definitions Are Important

It’s crucial to understand the definitions of these terms, because using them interchangeably causes confusion. As an SEO professional, you should be able to explain the process clearly to clients and stakeholders.

Creating Valuable and Unique Pages

Google values pages that are unique and valuable to its users. Review your content to confirm that each page is helpful and unique, and identify the issues that need improvement. If you are unsure where to start, analyze the pages with the lowest quality and the least organic traffic.

Having a Regular Plan for Updating Content

Websites within search results are continually changing, so you must stay updated and regularly change your content. Have a monthly or quarterly review of your website and track any changes your competitors make.

Removing Low-Quality Pages and Optimizing Content

Over time, pages may perform differently than expected and can hold your website back in terms of content. It’s essential to remove low-quality pages and prioritize which pages to remove based on relevance and contribution to the topic.

Ensuring That Robots.txt Does Not Block Pages

Check to see if Google is crawling or indexing any pages on your website. If not, you may have accidentally blocked crawling in the robots.txt file. Check the file and make sure there are no lines that block crawlers.
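The robots.txt check described above can be scripted. The sketch below is a minimal illustration that uses Python’s standard `urllib.robotparser`; the robots.txt content and the example.com URLs are hypothetical sample data, and the standard-library parser does not implement every wildcard extension that Googlebot understands, so treat its answers as a first pass rather than a final verdict.

```python
from urllib.robotparser import RobotFileParser


def blocked_urls(robots_txt: str, urls: list[str], user_agent: str = "Googlebot") -> list[str]:
    """Return the URLs that the given robots.txt text disallows for user_agent."""
    parser = RobotFileParser()
    parser.parse(robots_txt.splitlines())
    return [url for url in urls if not parser.can_fetch(user_agent, url)]


# Hypothetical robots.txt that accidentally blocks the whole /blog/ section.
SAMPLE_ROBOTS = """\
User-agent: *
Disallow: /blog/
Disallow: /cart/
"""

# Hypothetical URLs that you expect to be crawlable.
SAMPLE_URLS = [
    "https://www.example.com/",
    "https://www.example.com/blog/technical-seo/",
    "https://www.example.com/cart/checkout",
]

if __name__ == "__main__":
    for url in blocked_urls(SAMPLE_ROBOTS, SAMPLE_URLS):
        print("Blocked by robots.txt:", url)
```

Running the same function against your live robots.txt and a list of your most important URLs gives a quick answer to whether a stray Disallow line is the reason a page is not being crawled.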

Checking for Rogue Noindex Tags

Rogue noindex tags can be detrimental to your website’s indexing. A noindex tag left on a page by mistake, for example after a staging site goes live, keeps that page out of the index, so audit your site for such tags regularly.
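One way to audit for stray noindex tags is to parse each page’s HTML and look at its robots meta tags. The following is a minimal sketch using only Python’s standard library; the class and function names are illustrative, and it only inspects `<meta name="robots">` and `<meta name="googlebot">` tags, not HTTP headers.

```python
from html.parser import HTMLParser


class RobotsMetaParser(HTMLParser):
    """Collect directives from <meta name="robots"> and <meta name="googlebot"> tags."""

    def __init__(self) -> None:
        super().__init__()
        self.directives: set[str] = set()

    def handle_starttag(self, tag, attrs):
        if tag != "meta":
            return
        attributes = {name: (value or "") for name, value in attrs}
        if attributes.get("name", "").lower() in {"robots", "googlebot"}:
            for directive in attributes.get("content", "").split(","):
                self.directives.add(directive.strip().lower())


def has_noindex(html: str) -> bool:
    """Return True if the page carries a noindex (or 'none') robots directive."""
    parser = RobotsMetaParser()
    parser.feed(html)
    return "noindex" in parser.directives or "none" in parser.directives


if __name__ == "__main__":
    page = '<html><head><meta name="robots" content="noindex, follow"></head></html>'
    print(has_noindex(page))
```

Feeding the function the HTML of every URL in your sitemap produces a list of pages that you want indexed but that are telling search engines the opposite.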

Including All Pages in Your Sitemap

Make sure that all pages on your website are included in the sitemap. This can be challenging for larger websites, so ensure proper oversight and have all pages in the sitemap.

Removing Rogue Canonical Tags

Rogue canonical tags can prevent your site from getting indexed. Finding and fixing the error is the first step to correcting this issue. Make sure to implement a plan to correct these pages in volume, depending on the size of your site.
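Finding rogue canonical tags at volume usually means comparing each page’s own URL with the URL its canonical tag declares. The sketch below shows one way to do that with Python’s standard library; the function names and the example.com URLs are hypothetical, and the comparison deliberately ignores only a trailing slash.

```python
from html.parser import HTMLParser
from typing import Optional


class CanonicalParser(HTMLParser):
    """Capture the href of the first <link rel="canonical"> tag in a page."""

    def __init__(self) -> None:
        super().__init__()
        self.canonical: Optional[str] = None

    def handle_starttag(self, tag, attrs):
        if tag != "link" or self.canonical is not None:
            return
        attributes = {name: (value or "") for name, value in attrs}
        if "canonical" in attributes.get("rel", "").lower().split():
            self.canonical = attributes.get("href") or None


def canonical_mismatch(page_url: str, html: str) -> Optional[str]:
    """Return the canonical URL if it points away from page_url, else None."""
    parser = CanonicalParser()
    parser.feed(html)
    if parser.canonical and parser.canonical.rstrip("/") != page_url.rstrip("/"):
        return parser.canonical
    return None


if __name__ == "__main__":
    html = '<head><link rel="canonical" href="https://www.example.com/"></head>'
    print(canonical_mismatch("https://www.example.com/shoes/", html))
```

A mismatch is not always an error, since some pages are meant to point at a preferred version, but a product or article page whose canonical points at the homepage is a typical sign of a rogue tag.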

As a website owner, one of your primary concerns is ensuring that Google is properly indexing your website’s pages. Here are some things you can do to improve your website’s indexing processes:

Make Sure That the Non-Indexed Page Is Not Orphaned

An orphan page is a page that appears neither in the sitemap, nor in internal links, nor in the navigation, so Google cannot discover it through any of those methods. If you identify an orphaned page, you need to un-orphan it. You can do this by including your page in the following places:

- Your XML sitemap.

- Your top menu navigation.

- Internal links from important pages on your site.

Doing this gives you a greater chance of ensuring that Google will crawl and index that orphaned page, including it in the overall ranking calculation.
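Following the definition above, orphan detection comes down to a set difference: start from every page you know exists, then subtract the pages that are linked internally and the pages listed in the sitemap. The sketch below assumes you already have those three inputs (for example from a CMS export, a crawl, and your sitemap); all names and paths are hypothetical.

```python
def find_orphans(
    all_pages: set[str],
    internal_links: dict[str, set[str]],
    sitemap_urls: set[str],
) -> set[str]:
    """Return pages that no other page links to and that are missing from the sitemap.

    internal_links maps each source page to the pages it links to,
    including links in the navigation.
    """
    linked: set[str] = set()
    for source, targets in internal_links.items():
        # A page linking to itself does not make it discoverable.
        linked.update(target for target in targets if target != source)
    return all_pages - linked - sitemap_urls


if __name__ == "__main__":
    pages = {"/", "/services/", "/blog/", "/blog/old-landing-page/"}
    links = {
        "/": {"/services/", "/blog/"},
        "/services/": {"/"},
        "/blog/": {"/"},
    }
    sitemap = {"/", "/services/", "/blog/"}
    print(find_orphans(pages, links, sitemap))
```

Each URL the function returns is a candidate for the fixes listed above: add it to the sitemap, the navigation, or the body of a relevant, well-linked page.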

Repair All Nofollow Internal Links

Nofollow tells Google not to follow a particular link or pass value through it. (Google now treats the attribute as a hint rather than a strict directive.) If many of your internal links carry it, you inhibit Google’s crawling and indexing of your site’s pages. There are very few situations where you should nofollow an internal link, so do it only when necessary.

A nofollow also signals to Google that you do not vouch for the linked page, which is the wrong message to send about your own content.

If your internal links include nofollows, it is usually best to remove them. The main exception is user-generated content: blog comments attract a lot of automated spam, so links inside comments should keep their nofollow.
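To find the internal links that carry nofollow, you can parse a page’s anchors and keep only those that stay on the same host. This is a minimal sketch with Python’s standard library; the class name, function name, and example.com URLs are illustrative assumptions.

```python
from html.parser import HTMLParser
from urllib.parse import urljoin, urlparse


class NofollowLinkParser(HTMLParser):
    """Collect same-host links whose rel attribute contains 'nofollow'."""

    def __init__(self, page_url: str) -> None:
        super().__init__()
        self.page_url = page_url
        self.host = urlparse(page_url).netloc
        self.internal_nofollow: list[str] = []

    def handle_starttag(self, tag, attrs):
        if tag != "a":
            return
        attributes = {name: (value or "") for name, value in attrs}
        href = attributes.get("href", "")
        if not href:
            return
        target = urljoin(self.page_url, href)  # resolve relative links
        is_internal = urlparse(target).netloc == self.host
        if is_internal and "nofollow" in attributes.get("rel", "").lower().split():
            self.internal_nofollow.append(target)


def internal_nofollow_links(page_url: str, html: str) -> list[str]:
    parser = NofollowLinkParser(page_url)
    parser.feed(html)
    return parser.internal_nofollow


if __name__ == "__main__":
    sample = (
        '<a href="/pricing/" rel="nofollow">Pricing</a>'
        '<a href="https://other.example.org/" rel="nofollow">Partner</a>'
        '<a href="/about/">About</a>'
    )
    print(internal_nofollow_links("https://www.example.com/blog/", sample))
```

External nofollow links are ignored on purpose, because only the internal ones hold back the crawling of your own pages.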

Make Sure That You Add Powerful Internal Links

A run-of-the-mill internal link is just an internal link. Adding many of them may – or may not – do much for your rankings of the target page. But what if you add links from pages with backlinks that are passing value? Even better! What if you add links from more powerful pages that are already valuable? That is how you want to add internal links.

Before randomly adding internal links, you want to make sure that they are powerful and have enough value that they can help the target pages compete in the search engine results.

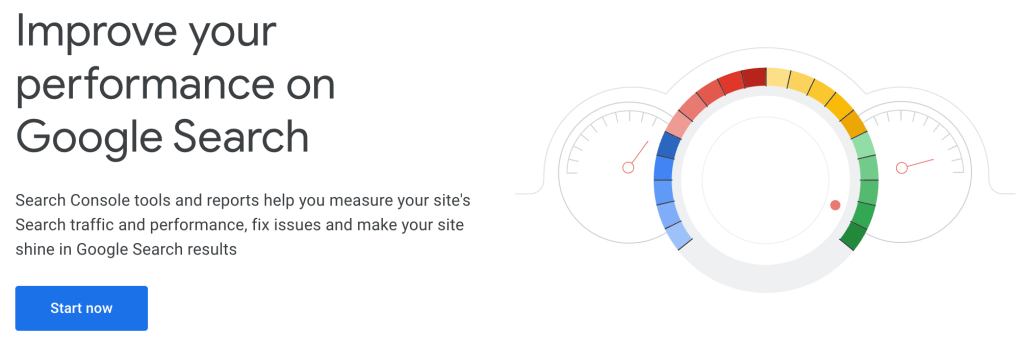

Submit Your Page to Google Search Console

If you’re still having trouble with Google indexing your page, consider submitting the URL through the URL Inspection tool in Google Search Console immediately after you hit the publish button. Doing this tells Google about your page directly instead of waiting for it to be discovered through links.

In addition, this usually results in indexing within a couple of days if your page is not suffering from quality issues.

Use the Rank Math Instant Indexing Plugin

Use the Rank Math Instant Indexing plugin to get your post indexed rapidly. With the plugin, your site’s pages typically get crawled and indexed quickly.

The plugin lets you ask Google to add the page you just published to a prioritized crawl queue. It uses Google’s Indexing API, which Google officially supports only for job posting and livestream pages, so results for other content types are not guaranteed. The plugin does not improve a page’s quality; it only shortens the time between publishing and crawling.

Boosting Search Indexation: Effective SEO Strategies and Tricks

Search Engine Optimization (SEO) is a critical part of digital marketing that can help boost your website’s visibility and drive more traffic by improving its ranking in search engine results. One of the essential aspects of SEO is ensuring that search engines correctly index your website. Here are some tips and tricks to help you improve your website’s search indexation:

Monitor crawl status regularly using Google Search Console:

Regularly checking your crawl status can help identify any potential errors. You can use the URL Inspection tool to quickly diagnose and resolve crawl errors to ensure that all target pages are crawled and indexed.

Create mobile-friendly webpages with optimized resources and interlinking:

Making your website mobile-friendly by implementing responsive web design, using the viewport meta tag, minifying on-page resources, and serving pages through the AMP cache can enhance your website’s search indexation. Consistent information architecture and deep linking to isolated web pages can also help.

Update content frequently to signal site improvement to search engines:

Creating new content regularly indicates to search engines that your site is continually improving. This tip is handy for publishers who need new stories published and indexed frequently.

Submit a sitemap to each search engine and optimize it for better indexation:

Create an XML sitemap with a sitemap generator or by hand, then submit it in Google Search Console and Bing Webmaster Tools to help search engines crawl your website more efficiently.

Optimize interlinking scheme to establish a consistent information architecture:

Establishing a consistent information architecture and creating main service categories where related web pages can sit can improve search indexation.

Minify on-page resources and improve load times for faster crawling:

Optimizing your webpages for speed by minifying on-page resources such as CSS and enabling caching and compression helps spiders crawl your site faster, which can improve search indexation.

Fix pages with noindex tags to prevent them from being excluded from search results:

Identify webpages whose noindex tags are keeping them out of the index, using tools like Screaming Frog, and use the Yoast plugin for WordPress to switch those pages from noindex back to index.

Set a custom crawl rate to avoid negative impacts on site performance:

Slowing or customizing the speed of your crawl rates can help avoid negative impacts on site performance, giving your website time to make necessary changes if going through a significant redesign or migration.

Eliminate duplicate content through canonical tags and optimized meta tags:

Blocking pages from being indexed or placing a canonical tag on the page you wish to be indexed, and optimizing meta tags of each page to prevent search engines from mistaking similar pages as duplicate content in their crawl can improve your website’s search indexation.

Block pages that you don’t want spiders to crawl to improve crawl efficiency:

Placing a noindex tag on the page, disallowing the URL in your robots.txt file, or deleting the page altogether can help improve crawl efficiency.

Deep link to isolated web pages for better indexation and visibility:

Deep linking to isolated webpages by acquiring a link on an external domain can get them indexed, leading to better visibility and improved search indexation.

Improving your website’s indexability and crawl budget is crucial to ensuring that it is correctly indexed by search engines, which can improve your rankings and drive more traffic to your site. It’s crucial to stay up-to-date with potential crawl errors and other indexation issues, so regularly scan your website in Google Search Console to identify any problems and fix them quickly. With these 11 tips and tricks, you can improve your website’s search indexation and enhance its overall visibility on the web.

The Importance of Sitemaps: Understanding What They Are and Why You Need Them

Sitemaps are an essential aspect of search engine optimization (SEO), yet many website owners and managers remain uncertain about their purpose and function. In this article, we’ll take a closer look at sitemaps and answer some of the most common questions, including:

- What is a sitemap?

- Do I need one?

- How do I create one?

By the end of this article, you’ll better understand sitemaps and how they can benefit your website.

What is a Sitemap?

A sitemap is a file that lists all the pages on your website and provides additional information about each page. Two main types of sitemaps apply to SEO:

XML sitemaps –

This type of sitemap is designed for search engines and provides a roadmap for Google and other bots to crawl your site. An XML sitemap includes essential pages, videos, and other important files. It can also provide details about when a page was last updated and whether the content is available in other languages.

HTML sitemaps –

This type of sitemap is designed for users and provides a navigational directory of all the pages on your site. An HTML sitemap helps users find what they need quickly and easily.

Do I Need a Sitemap?

Whether or not you need a sitemap depends on a few factors, such as the size and structure of your website. Here are some questions to consider:

- How big is your site? An XML sitemap is recommended if it’s large enough that Google might miss newly updated content during crawling.

- Is your site new or lacking external links? If so, an XML sitemap can help Google discover your site more quickly.

- Do you have a lot of content, such as photos, videos, and news articles? An XML sitemap can include details about these types of content.

- Is your site poorly structured or do you have archived and orphan pages? An XML sitemap can help ensure these pages are indexed.

- HTML sitemaps are not necessary for SEO but can benefit users, especially for sites with a large number of pages.

How to Create a Sitemap

Creating a sitemap can seem overwhelming, but there are tools available to help. Here are some best practices for creating an XML sitemap:

- Include only URLs you want to be indexed.

- Do not use session IDs.

- Include media assets such as videos, photos, and news items.

- Use hreflang to show alternative language versions of your website.

- Regularly update your sitemap to ensure it’s accurate.
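As an illustration of these practices, the sketch below builds a small XML sitemap with Python’s standard library. The page list is hypothetical, and the `sessionid=` check is only an assumed example of how session-ID URLs might be recognized on a given site; adapt it to whatever parameter your platform uses.

```python
import xml.etree.ElementTree as ET

SITEMAP_NS = "http://www.sitemaps.org/schemas/sitemap/0.9"


def build_sitemap(pages: list[tuple[str, str]]) -> str:
    """Build sitemap XML from (absolute URL, last-modified date as YYYY-MM-DD) pairs."""
    urlset = ET.Element("urlset", xmlns=SITEMAP_NS)
    for loc, lastmod in pages:
        # Assumed convention: session IDs travel in a "sessionid" query parameter.
        if "sessionid=" in loc.lower():
            continue
        url = ET.SubElement(urlset, "url")
        ET.SubElement(url, "loc").text = loc
        ET.SubElement(url, "lastmod").text = lastmod
    body = ET.tostring(urlset, encoding="unicode")
    return '<?xml version="1.0" encoding="UTF-8"?>\n' + body


if __name__ == "__main__":
    # Hypothetical pages that should be indexed.
    print(build_sitemap([
        ("https://www.example.com/", "2024-01-15"),
        ("https://www.example.com/services/", "2024-01-10"),
    ]))
```

Regenerating the file from your list of indexable URLs on every publish is one simple way to keep the sitemap accurate.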

Tools for Creating Sitemaps

Here are some popular tools for creating sitemaps:

- Screaming Frog – A great option for generating an XML sitemap after crawling your URLs.

- XML-Sitemaps.com – A web-based application that generates an XML file from your website URL.

- Yoast SEO, All in One SEO, and Google XML Sitemaps – WordPress plugins for creating XML sitemaps.

- Simple Sitemap, All in One SEO, and Companion Sitemap Generator – WordPress plugins for creating HTML sitemaps.

Sitemaps are an essential tool for improving the discoverability of your website. By creating and maintaining a sitemap, you can help search engines crawl and index your site more effectively, while also making it easier for users to find what they’re looking for. Use the best practices and tools outlined in this article to create an XML and/or HTML sitemap for your website.

Technical SEO: Understanding Website Redirects and Their Impact

Redirects are important in how websites are crawled and indexed by search engines like Google. In this article, we’ll look closer at the different types of redirects and how they can impact your site’s SEO.

Exploring the Types of Redirects

Website redirects are often thought of as simple detours to another URL, but there’s more to them than that. There are three main types of redirects: meta refresh redirects, JavaScript redirects, and HTTP redirects.

1. Meta Refresh Redirects and Their Limitations

Meta refresh redirects are set at the page level and are generally not recommended for SEO purposes. There are two types of meta refresh: delayed, which is seen as a temporary redirect, and instant, which is seen as a permanent redirect.

2. JavaScript Redirects and Their Impact on SEO

JavaScript redirects are also set on the client side, in the page itself, and can cause SEO issues. Google has stated a preference for HTTP server-side redirects.

3. HTTP Redirects and Their Advantages for SEO

HTTP redirects are set server-side and are the best approach for SEO purposes. We’ll cover them in more detail later in this article.

HTTP Response Status Codes

Before we dive into HTTP redirects, let’s first understand how user agents and web servers communicate. When a user agent like Googlebot tries to access a webpage, it sends a request to the web server, which answers with an HTTP response status code.

Understanding the Meaning of HTTP Response Status Codes

HTTP response status codes describe the outcome of a request for a URL. For example, if the request succeeds, the server responds with a status code of 200 (OK).

Common Types of HTTP Redirects and Their Use Cases

Now that we understand HTTP response status codes, let’s look at the different types of HTTP redirects and when to use them.

1. Permanent Redirects: 301 and Its Implications for SEO

The 301 status code, also known as a “moved permanently” redirect, indicates to a user agent that the URL has been permanently moved to another location. This is important for SEO purposes because search engines will forward any authority or ranking signals from links pointing to the old URL to the new URL.

2. Temporary Redirects: 302 and 307 and When to Use Them

The 302 and 307 status codes are used for temporary redirects. Both tell the user agent that the requested URL has temporarily moved to a different location and that it should keep using the original URL for future requests. The difference is that a 307 requires the user agent to repeat the request with the same HTTP method, whereas a 302 historically allowed clients to change a POST into a GET.
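When you audit redirects in bulk, it helps to label each status code the way this section describes it. The following minimal sketch uses Python’s standard `http.HTTPStatus` enum; the function name and the label wording are illustrative, and only the codes discussed here get a specific label.

```python
from http import HTTPStatus


def describe_status(code: int) -> str:
    """Label a status code as a permanent redirect, temporary redirect, success, or other."""
    status = HTTPStatus(code)  # raises ValueError for codes the enum does not know
    if status == HTTPStatus.MOVED_PERMANENTLY:  # 301
        return "permanent redirect"
    if status in (HTTPStatus.FOUND, HTTPStatus.TEMPORARY_REDIRECT):  # 302, 307
        return "temporary redirect"
    if status == HTTPStatus.OK:  # 200
        return "success"
    return f"other ({status.phrase})"


if __name__ == "__main__":
    for code in (200, 301, 302, 307, 404):
        print(code, describe_status(code))
```

A crawl export that shows a "temporary redirect" on a URL you moved for good is a signal to switch that rule to a 301.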

Implementing Redirects for SEO

Now that we understand the different types of redirects and when to use them, let’s look at some best practices for implementing redirects for SEO.

Best Practices for Redirecting Single URLs

When redirecting a single URL, using the correct syntax and regular expressions is important to ensure that only the intended URL is redirected.

Redirecting Multiple URLs with Regular Expressions

When redirecting multiple URLs with regular expressions, test your logic to avoid unexpected results. Use caution when using regex for group redirects, because a pattern that is too broad can redirect URLs you did not intend to touch.

Handling Directory Changes and Word Removals in URLs

When handling directory changes or word removals in URLs, it’s important to use the correct syntax and regular expressions to ensure that only the intended URLs are redirected.

Additional Considerations for Redirecting Websites

There are a few additional considerations regarding redirecting websites for SEO purposes.

Canonical URLs and Their Importance in SEO

Canonical URLs are important for avoiding duplicate content issues and consolidating ranking signals from multiple URLs.

Redirects are a critical component of website optimization and search engine optimization (SEO). When you restructure a website, migrate to HTTPS, or change domains, they become even more critical. The rest of this section covers some common redirect scenarios and best practices for implementing them.

Directory Change

If you need to move everything from an old directory to a new one, you can use the following rewrite rule:

RewriteRule ^old-directory$ /new-directory/ [R=301,NC,L]

RewriteRule ^old-directory/(.*)$ /new-directory/$1 [R=301,NC,L]

This rule tells the server to remember everything in the URL that follows /old-directory/ and pass it on to the destination. By using two rules (one with a trailing slash and one without), you can avoid adding an extra slash at the end of the URL when a requested URL with no trailing slash has a query string.

Remove a Word from URL

If you want to remove a word (e.g., “Chicago”) from 100 URLs on your website, you can use the following rewrite rule:

RewriteRule ^(.*)-chicago-(.*) http://%{SERVER_NAME}/$1-$2 [NC,R=301,L]

This rule will remove “chicago” from URLs that have it in the form of example-chicago-event.

If the URL is in the form of example/chicago/event, you can use the following rule:

RewriteRule ^(.*)/chicago/(.*) http://%{SERVER_NAME}/$1/$2 [NC,R=301,L]
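Because group redirects are easy to get wrong, it can be worth trying the patterns against sample paths before deploying them. The sketch below is a rough stand-in, not a replica of mod_rewrite: it applies the two patterns, written with `(.*)` capture groups, using Python’s `re` module, which behaves like Apache’s regex engine for simple expressions such as these. The sample paths are hypothetical and, as in a .htaccess context, have no leading slash.

```python
import re
from typing import Optional

# The two patterns for removing "chicago", with case-insensitive matching like [NC].
HYPHEN_RULE = re.compile(r"^(.*)-chicago-(.*)", re.IGNORECASE)
SLASH_RULE = re.compile(r"^(.*)/chicago/(.*)", re.IGNORECASE)

RULES = (
    (HYPHEN_RULE, r"\1-\2"),
    (SLASH_RULE, r"\1/\2"),
)


def rewrite(path: str) -> Optional[str]:
    """Return the redirect target for path, or None if no rule matches."""
    for pattern, template in RULES:
        match = pattern.match(path)
        if match:
            return "/" + match.expand(template)
    return None


if __name__ == "__main__":
    for sample in ("example-chicago-event", "example/chicago/event", "chicago-style-pizza"):
        print(sample, "->", rewrite(sample))
```

The third sample shows why testing matters: a URL that merely starts with the word is left alone by these patterns, which may or may not be what you intended.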

Set a Canonical URL

Canonical URLs are crucial for SEO, as search engines treat URLs with “www” and “non-www” versions as different pages with the same content. To ensure that your website runs only with one version, use the following rule:

For a “www” version:

RewriteCond %{HTTP_HOST} ^yourwebsite\.com$ [NC]

RewriteRule ^(.*)$ http://www.yourwebsite.com/$1 [L,R=301]

For a “non-www” version:

RewriteCond %{HTTP_HOST} ^www\.yourwebsite\.com$ [NC]

RewriteRule ^(.*)$ http://yourwebsite.com/$1 [L,R=301]

To include trailing slashes as part of canonicalization, use the following rule:

RewriteCond %{REQUEST_FILENAME} !-f

RewriteRule ^(.*[^/])$ /$1/ [L,R=301]

This will redirect /example-page to /example-page/.

HTTP to HTTPS Redirect

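To force HTTPS on every page of your website, use the following rule:

RewriteCond %{HTTPS} off [OR]

RewriteCond %{HTTP_HOST} ^yourwebsite\.com$ [NC]

RewriteRule ^(.*)$ https://www.yourwebsite.com/$1 [L,R=301]

This rule combines the HTTPS redirect and the non-www to www redirect into one rule. The %{HTTPS} condition matters: without it, requests that already use https://www.yourwebsite.com would match a host condition and redirect to themselves in a loop.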

Redirect from Old Domain to New

To redirect an old domain to a new one, use the following rule:

RewriteCond %{HTTP_HOST} ^old-domain\.com$ [NC,OR]

RewriteCond %{HTTP_HOST} ^www\.old-domain\.com$ [NC]

RewriteRule ^(.*)$ http://www.new-domain.com/$1 [R=301,L]

This rule uses two conditions, one for the “www” version of the URLs and one for the “non-www” version, because for historical reasons any page may have incoming links to both versions.

Redirect Best Practices

Don’t redirect all 404 broken URLs to the homepage. Instead, create beautiful 404 pages that engage users and help them find what they were looking for. When you do redirect a page, the content at the destination should be equivalent to the content of the old page.

Understanding redirects and their appropriate status codes is crucial for optimizing web pages and improving SEO. Various situations, such as migrating a website to a new domain or temporarily holding a webpage under a different URL, require precise knowledge of redirects. While plugins offer convenient options for redirect management, they should only be used with a proper understanding of when and why to use specific types of redirects.

The Fundamentals of Technical SEO: Understanding Website Indexing for Search Engines

Website indexing is the step that follows crawling: the search engine works out what a webpage is about so that the page can be ranked and displayed in search results.

Search engines keep refining how they crawl and index websites. To do technical SEO well and devise effective strategies for boosting search visibility, you need to understand how Google and Bing crawl and index websites.

Importance of website indexing:

Website indexing is a crucial step in how web pages are ranked and served as search engine results. Search engines use indexing to understand what webpages are about, making it essential to stay updated on how Google and Bing approach crawling and indexing websites.

Refinement of search engines:

Search engines are constantly improving how they crawl and index websites, making it necessary to understand their operations for successful indexing.

Methods for indexing new pages:

If you create a new page on your site, there are several ways it can be indexed. The simplest way is to let Google discover it by following links. However, if you want Googlebot to get to your page faster, there are additional methods you can use such as XML sitemaps or requesting indexing with Google Search Console.

XML sitemaps:

XML sitemaps are a reliable way to call a search engine’s attention to content. This method provides search engines with a list of all the pages on your site, along with additional details such as the last modified date.

IndexNow protocol:

Bing has an open protocol called IndexNow, based on a push method of alerting search engines of new or updated content. This protocol is faster than XML sitemaps and uses fewer resources.
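To make the push model concrete, the sketch below prepares an IndexNow submission with Python’s standard library without sending it. The endpoint URL and the JSON field names (`host`, `key`, `keyLocation`, `urlList`) reflect the protocol as commonly documented, but treat them as assumptions to verify against the current IndexNow documentation; the host, key, and URLs are hypothetical.

```python
import json
from urllib.request import Request

# Assumed shared IndexNow endpoint; individual search engines also expose their own.
INDEXNOW_ENDPOINT = "https://api.indexnow.org/indexnow"


def build_indexnow_request(host: str, key: str, urls: list[str]) -> Request:
    """Prepare (but do not send) a POST request announcing new or updated URLs."""
    payload = {
        "host": host,
        "key": key,
        # Assumed convention: the key file is hosted at the site root as <key>.txt.
        "keyLocation": f"https://{host}/{key}.txt",
        "urlList": urls,
    }
    return Request(
        INDEXNOW_ENDPOINT,
        data=json.dumps(payload).encode("utf-8"),
        headers={"Content-Type": "application/json; charset=utf-8"},
        method="POST",
    )


if __name__ == "__main__":
    request = build_indexnow_request(
        "www.example.com",
        "hypothetical-key-123",
        ["https://www.example.com/new-page/"],
    )
    print(request.get_method(), request.full_url)
    print(request.data.decode("utf-8"))
```

Passing the prepared request to `urllib.request.urlopen` would perform the actual submission; keeping construction separate makes it easy to inspect the payload first.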

Bing Webmaster Tools:

Bing Webmaster Tools is another method to consider for getting your content indexed. It offers substantial information that can help assess problem areas and improve your rankings on Bing, Google, and elsewhere.

Crawl budget:

Crawl budget describes the amount of resources that Google will spend crawling a website. Understanding the crawl budget and how it’s determined is essential for successful indexing.

Optimizing websites for search engines starts with good content and ends with sending it off to get indexed. Understanding how search indexing works is essential for creating effective strategies for improving search visibility.

X-Robots-Tag HTTP Header: Controlling Crawling and Indexing for Improved SEO

The X-Robots-Tag is a powerful tool for search engine optimization (SEO). This HTTP response header allows webmasters to instruct search engine bots on which pages and content to index on their site. In this article, we will discuss the X-Robots-Tag and how to use it to control how your website is crawled and indexed.

Controlling Crawling and Indexing

Search engine spiders are crucial for driving traffic to a website, but not all pages need to be crawled or indexed. For example, privacy policies or internal search pages may not be useful for driving traffic and could even divert traffic from more important pages. To avoid this, webmasters can use robots.txt or meta robots tags to control how search engine bots crawl and index their websites.

X-Robots-Tag: An Alternative to Meta Robots Tag

The X-Robots-Tag is another way to control how search engine spiders crawl and index webpages. This HTTP response header can control indexing for an entire page as well as for specific elements on it. While meta robots tags are more common, the X-Robots-Tag is more flexible: it can be applied to non-HTML files and can serve directives site-wide instead of at the page level.

When to Use the X-Robots-Tag

The X-Robots-Tag is useful in situations where you want to control how non-HTML files are crawled and indexed or when you want to serve directives site-wide. For example, if you want to keep a specific image or video out of the index, the X-Robots-Tag makes it easy. You can use regular expressions to apply directives to non-HTML files and to set parameters at a larger, global level.

Where to Put the X-Robots-Tag

You can add the X-Robots-Tag to an Apache configuration or a .htaccess file to block specific file types. In Apache servers, the X-Robots-Tag can be added to an HTTP response header in a .htaccess file.

Real-World Examples and Uses of the X-Robots-Tag

Let’s look at some real-world examples of how to use the X-Robots-Tag. To block search engines from indexing .pdf file types, you can use the following configuration in Apache servers:

<Files ~ "\.pdf$">

Header set X-Robots-Tag "noindex, nofollow"

</Files>

In Nginx, the configuration would look like this:

location ~* \.pdf$ {

add_header X-Robots-Tag "noindex, nofollow";

}

Checking for an X-Robots-Tag

You can check for an X-Robots-Tag using a browser extension that provides information about the URL. The Web Developer plugin lets you see the various HTTP headers used. You can also use Screaming Frog to identify sections of the site using the X-Robots-Tag and which specific directives are being used.
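If you prefer to script the check, you can inspect the response headers yourself. The sketch below is a minimal illustration that works on a plain dictionary of headers; the function names are hypothetical, and it does not handle user-agent-specific values such as `googlebot: noindex`.

```python
def x_robots_directives(headers: dict[str, str]) -> set[str]:
    """Return the lowercase directives found in an X-Robots-Tag header, if any."""
    value = ""
    for name, header_value in headers.items():
        if name.lower() == "x-robots-tag":  # header names are case-insensitive
            value = header_value
    return {part.strip().lower() for part in value.split(",") if part.strip()}


def blocks_indexing(headers: dict[str, str]) -> bool:
    """Return True if the headers tell search engines not to index the resource."""
    directives = x_robots_directives(headers)
    return "noindex" in directives or "none" in directives


if __name__ == "__main__":
    # Hypothetical headers, as a server configured to noindex PDFs might return them.
    pdf_headers = {"Content-Type": "application/pdf", "X-Robots-Tag": "noindex, nofollow"}
    print(x_robots_directives(pdf_headers))
    print(blocks_indexing(pdf_headers))
```

In practice the dictionary could come from any HTTP client, for example `dict(urllib.request.urlopen(url).headers)`, so the same check can run over every URL in a crawl list.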

The X-Robots-Tag is a powerful tool for controlling how search engine bots crawl and index your website. It is more flexible than meta robots tags and can be used to control non-HTML files and serve directives site-wide. By using regular expressions, you can apply directives to non-HTML files and set parameters at a larger, global level. Just be aware of the danger of deindexing your entire site by mistake, and take the time to check your work.

Essential Technical SEO Tools for Agencies: Optimizing Your Website for Success

As an agency, your technical SEO strategy can make or break your client’s online presence. With so many tools available, it’s important to have the right ones in your arsenal. This blog post will discuss 20 essential technical SEO tools for agencies.

1. Google Search Console

Google Search Console (GSC) is the primary tool for any SEO professional. It provides a wealth of data, features, and reports that can help identify website search performance issues.

Here are some key points to keep in mind when using Google Search Console:

- GSC has recently been overhauled, replacing many old features and adding more data, features, and reports.

- Setting up a reporting process is essential for agencies that do SEO. Saved reports can rescue you when a website change-over goes wrong or a GSC account is wiped out.

2. Semrush

When it comes to accurate data for keyword research and technical analysis, Semrush is a go-to tool for many SEO professionals. However, it also provides valuable competitor analysis data that can give you an edge over your rivals.

- Competitor analysis with Semrush can reveal surprising insights that help you better tailor your SEO strategy.

- By going deep into a competitor’s rankings and market analysis data, you can uncover patterns that can inform your approach to keyword research and link building.

- While it’s not always thought of as a technical SEO tool, Semrush’s competitor analysis data is an essential component of modern SEO.

3. Google PageSpeed Insights

Google PageSpeed Insights is an excellent tool for agencies that want to get their website page speed in check. The tool uses a combination of speed metrics for both desktop and mobile, providing a comprehensive analysis of a website’s page speed. Although PageSpeed Insights should not be used as the only metric for page speed testing, it is an excellent starting point.

Here are some key points to keep in mind when using Google PageSpeed Insights:

- PageSpeed Insights uses approximations, so you may get different results with other tools.

- Google PageSpeed provides only part of the picture, and you need more complete data for effective analysis. Therefore, it’s best to use multiple tools for your analysis to get a complete picture of your website’s performance.

4. Screaming Frog

Screaming Frog is a web crawler that is essential for any substantial website audit. It can help you identify the basics, such as missing page titles, meta descriptions, and meta keywords, and errors in URLs and canonicals. It can also take a deep dive into a website’s architecture and identify issues with pagination and international SEO implementation.

Here are some key points to keep in mind when using Screaming Frog:

- Be mindful of specific settings that can introduce false positives or errors into the audit.

- Screaming Frog is a powerful tool that can help identify various technical issues that may affect a website’s performance.

5. Google Analytics

Google Analytics provides valuable data that can help identify penalties, issues with traffic, and other website-related issues. It’s essential to have a monthly reporting process in place to save data for unexpected situations that may arise with a client’s Google Analytics access.

Here are some key points to keep in mind when using Google Analytics:

- It’s important to set up Google Analytics correctly to get the most out of its features.

- A monthly reporting process can help you save data and prevent situations where you may lose all data for your clients.

6. Google Mobile-Friendly Testing Tool

In today’s mobile-first world, determining a website’s mobile technical aspects is critical for any website audit. The Google Mobile-Friendly Testing Tool is a great way to test a website’s mobile implementation.

Here are some key points to keep in mind when using Google Mobile-Friendly Testing Tool:

- The tool provides insights into a website’s mobile implementation, allowing agencies to improve the mobile user experience.

- The tool helps agencies identify and fix mobile technical issues before they negatively impact the website’s performance.

7. Web Developer Toolbar

The Web Developer Toolbar is a Google Chrome extension that helps identify issues with code, specifically JavaScript implementations with menus and the user interface. It can help you identify broken images, view meta tag information, and response headers.

Here are some key points to keep in mind when using the Web Developer Toolbar:

- This extension is useful for identifying JavaScript and CSS issues.

- You can turn off JavaScript and CSS to identify where these issues occur in the browser.

8. WebPageTest

WebPageTest is an essential tool for auditing website page speed. It can help you identify how long a site takes to load fully, the time to first byte, the start render time, and the number of document object model (DOM) elements. It’s useful for determining how a site’s technical elements interact to create the final result or display time.

Here are some key points to keep in mind when using WebPageTest:

- This tool is useful for conducting waterfall speed tests and competitor speed tests.

- You can also generate page-load videos for competitor sites to compare with your own website’s performance.

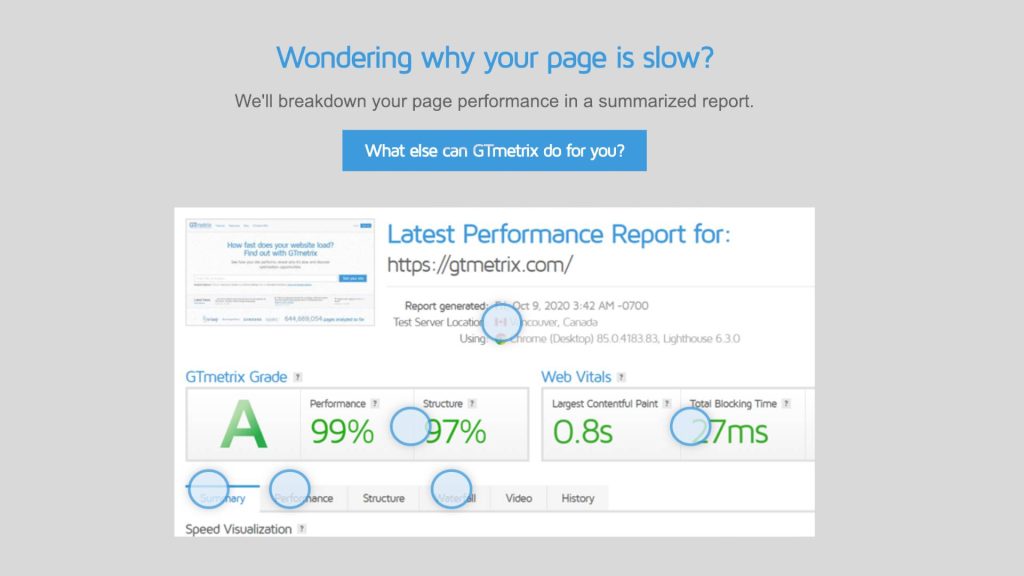

9. GTmetrix Page Speed Report

GTmetrix is a page speed report card that provides a different perspective on page speed. By diving deep into page requests, the CSS and JavaScript files that need to load, and other website elements, it is possible to clean up many of the elements that contribute to slow page loads.

Here are some key points to keep in mind when using GTmetrix Page Speed Report:

- The tool provides a comprehensive report on the page speed performance.

- The tool helps agencies identify website elements that contribute to slow page loads.

10. Barracuda Panguin

If you are investigating a site for a penalty, the Barracuda Panguin tool is a must-have in your workflow. This tool connects to the Google Analytics account of the site you are investigating and overlays the dates of known Google updates, when a penalty may have occurred, on your GA data. Using this overlay, it is possible to easily identify situations where a potential penalty has occurred.

Here are some key benefits of using Barracuda Panguin for your technical SEO analysis:

- Easily identify potential penalties: The overlay feature allows you to see when a penalty occurred in relation to your GA data, helping you pinpoint potential issues that may be impacting your site’s search engine visibility.

- Zero in on data approximations: While there isn’t an exact science to identifying penalties, using tools like Barracuda Panguin can help you identify approximations in data events as they occur, which can be valuable for investigative purposes.

- Investigate all avenues: It’s important to investigate all avenues of where data is potentially showing something happening in order to rule out any potential penalty. Barracuda Panguin can help you do just that.

11. Google’s Rich Results Testing Tool

The Google Structured Data Testing Tool has been deprecated and replaced by the Google Rich Results Testing Tool. This tool helps you test Schema structured data markup against the known data from Schema.org that Google supports.

Here are some key points to keep in mind when using Google’s Rich Results Testing Tool:

- The tool performs one function, which is to test Schema structured data markup against the known data from Schema.org that Google supports.

- The tool helps agencies identify issues with their Schema coding before the code is implemented.

12. W3C Validator

Although not specifically designed as an SEO tool, the W3C Validator is still an essential tool for website auditing. It helps identify technical issues with a website’s code and improve its overall performance.

Here are some key points to keep in mind when using W3C Validator:

- The tool helps identify technical issues with a website’s code and improve its overall performance.

- If you don’t know what you’re doing, misinterpreting the results can make things worse. Therefore, it’s best to have an experienced developer interpret the results.

13. Ahrefs

Another popular tool for technical SEO is Ahrefs. It’s particularly useful for link analysis, allowing you to identify patterns in a site’s link profile and understand its linking strategy.

- Ahrefs can help you identify anchor text issues that may be impacting a site.

- The tool allows you to identify the types of links linking back to a site, such as blog networks, high-risk link profiles, and forum/Web 2.0 links.

- With Ahrefs, you can also pinpoint when a site’s backlinks started to go missing and track its linking patterns over time.

14. Majestic

Majestic is a long-standing tool in the SEO industry, providing unique insights into a site’s linking profile.

- Like Ahrefs, Majestic allows you to identify linking patterns by downloading reports of a site’s full link profile.

- With the tool, you can find bad neighborhoods and other domains owned by a website owner, which can help diagnose issues with a site’s linking.

- Majestic has its own values for calculating technical link attributes, such as Trust Flow and Citation Flow, and its own link graphs to identify any issues occurring with the link profile over time.

15. Moz Bar

While it may seem whimsical at first glance, Moz Bar is actually a powerful technical SEO tool that provides valuable metrics for analysis.

- Moz Bar can help you see a site’s Domain Authority and Page Authority, Google Caching status, and other important information.

- Without diving deep into a crawl, you can also view advanced elements like rel=“canonical” tags, page load time, Schema Markup, and the page’s HTTP status.

- Moz Bar is particularly useful for an initial site survey before diving deeper into a full SEO audit.

16. Google Search Console XML Sitemap Report

Diagnosing sitemap issues is a critical part of any SEO audit. The Google Search Console XML Sitemap Report is essential for keeping a 1:1 match between the URLs on the site and the URLs listed in the sitemap as new pages are added. Here’s what you need to know about this tool:

- Ensure a complete sitemap: The sitemap should contain all 200 OK URLs, and no 4xx or 5xx URLs should appear. The Google Search Console XML Sitemap Report can help ensure your sitemap is complete and error-free.

- Achieve a 1:1 ratio of URLs: The sitemap should list exactly the URLs that exist on the site, no more and no fewer. In particular, the sitemap should not contain orphaned pages that fail to show up in the Screaming Frog crawl.

- Remove parameter-laden URLs: If parameterized URLs are not considered primary pages, they should be removed from the sitemap. Certain parameters can also prevent XML sitemaps from validating, so it’s important to keep them out of the listed URLs.
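
The three checks above can be sketched as a small script. This is a minimal illustration rather than a feature of Search Console or Screaming Frog: it assumes you have the sitemap as an XML string and a crawl export reduced to a mapping of URL to HTTP status. The function names and result keys are hypothetical.

```python
import xml.etree.ElementTree as ET
from urllib.parse import urlsplit

# Namespace used by standard XML sitemaps.
SITEMAP_NS = {"sm": "http://www.sitemaps.org/schemas/sitemap/0.9"}


def sitemap_urls(sitemap_xml: str) -> set:
    """Return every <loc> value found in a sitemap document."""
    root = ET.fromstring(sitemap_xml)
    return {loc.text.strip() for loc in root.findall(".//sm:loc", SITEMAP_NS) if loc.text}


def audit_sitemap(sitemap_xml: str, crawl_status: dict) -> dict:
    """Compare sitemap URLs with crawl results (URL -> HTTP status code)."""
    listed = sitemap_urls(sitemap_xml)
    crawled_ok = {url for url, status in crawl_status.items() if status == 200}
    return {
        # Listed in the sitemap, crawled, but not returning 200 OK.
        "non_200_in_sitemap": sorted(
            u for u in listed if u in crawl_status and crawl_status[u] != 200
        ),
        # Listed in the sitemap but never reached by the crawl (possible orphans).
        "not_found_in_crawl": sorted(u for u in listed if u not in crawl_status),
        # Returning 200 OK in the crawl but absent from the sitemap.
        "missing_from_sitemap": sorted(crawled_ok - listed),
        # Listed URLs that carry a query string.
        "parameterized": sorted(u for u in listed if urlsplit(u).query),
    }
```

An empty list under every key would correspond to the 1:1 match described above. Whether a parameterized URL is a primary page is still a judgment call that the script cannot make for you.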

17. BrightLocal

If you’re looking for a tool to help you with your local SEO profile, BrightLocal is an excellent choice. Although it’s not primarily thought of as a technical SEO tool, it can help you identify technical issues on your site. Here are some of the features that make BrightLocal a must-have tool for your local business:

- Audit your site’s local SEO citations

- Identify and submit your site to appropriate citations

- Use a pre-populated list of potential citations

- Audit, clean, and build citations to the most common citation sites

- In addition, BrightLocal includes in-depth auditing of your Google Business Profile presence, including local SEO audits.

18. Whitespark

Whitespark is a more in-depth tool compared to BrightLocal. It offers a local citation finder that helps you dive deeper into your site’s local SEO. Here’s what you can expect from Whitespark:

- Identify where your site is across the competitor space

- Identify all your competitor’s local SEO citations

- Track rankings through detailed reporting focused on distinct Google local positions

- Detailed organic rankings report from both Google and Bing

19. Botify

If you’re looking for a complete technical SEO tool, Botify is an excellent choice. It can help you reconcile search intent and technical SEO with its in-depth keyword analysis tool. Here are some of the features of Botify:

- Tie crawl budget and technical SEO elements to searcher intent

- Identify all the technical SEO factors that contribute to ranking

- Detect changes in how people are searching

- In-depth reports that tie data to actionable information

20. Excel

Excel may not be considered a technical SEO tool, but it has some “super tricks” that can help you perform technical SEO audits more efficiently. Here are some of the features of Excel that make it a valuable tool:

- VLOOKUP allows you to pull data from multiple sheets to populate the primary sheet

- Easy XML Sitemaps can be coded in Excel in a matter of minutes

- Conditional Formatting allows you to reconcile long lists of information in Excel

While SEO tools can help create efficiencies, they do not replace manual work. It’s essential to have a combination of both to get the best results. By using the right tools, you can provide another dimension to your analysis that standard analysis might not provide.

Google Lighthouse: What It Is and How It Works

Google Lighthouse is an open-source tool that measures website performance and provides actionable insights for improvement. Lighthouse uses the Chromium browser to render pages and runs its audits as the pages load. Each audit falls into one of five categories: Performance, Accessibility, Best Practices, SEO, and Progressive Web App (the PWA category was removed in Lighthouse 12).

Lighthouse vs. Core Web Vitals:

Core Web Vitals is a set of three metrics introduced by Google in 2020 to measure web performance in a user-centric manner. These metrics are Largest Contentful Paint (LCP), First Input Delay (FID), and Cumulative Layout Shift (CLS). Because FID requires a real user interaction, Lighthouse reports Total Blocking Time (TBT) as its lab proxy. The two also differ in where the data comes from: Lighthouse runs emulated lab tests, while Core Web Vitals are calculated from real page loads worldwide.

Why Lighthouse May Not Match Search Console/Crux Reports:

Lighthouse performance data is lab data: it doesn’t reflect each real user’s network connection, device processing power, or physical distance from the site’s servers. Instead, the tool emulates a mid-range device, throttles the CPU and network to simulate an average user, and runs in a controlled environment with predefined settings. Search Console and CrUX reports, by contrast, aggregate field data from real visits, so the numbers can differ. The good news is that you don’t have to choose between Lighthouse and Core Web Vitals; they’re designed to be part of the same workflow.

How Lighthouse Performance Score Is Calculated:

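Lighthouse’s performance score is a weighted average of several metric scores, and the set of metrics has changed between versions. As of Lighthouse 10, five metrics are weighted: Total Blocking Time (TBT, 30%), Largest Contentful Paint (LCP, 25%), Cumulative Layout Shift (CLS, 25%), First Contentful Paint (FCP, 10%), and Speed Index (10%). Time to Interactive (TTI) was weighted in earlier versions, and First Meaningful Paint (FMP) has been deprecated since Lighthouse 6; both are still described below because they appear in older reports.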
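
To make the weighting concrete, here is a minimal sketch of the final step of the calculation. It assumes the Lighthouse 10 weights (other versions weight the metrics differently) and takes each metric’s score as a value between 0 and 1 that Lighthouse has already derived from the raw timing. The function name is hypothetical.

```python
# Metric weights as of Lighthouse 10 (an assumption; check the version you run).
WEIGHTS = {
    "first-contentful-paint": 0.10,
    "speed-index": 0.10,
    "largest-contentful-paint": 0.25,
    "total-blocking-time": 0.30,
    "cumulative-layout-shift": 0.25,
}


def performance_score(metric_scores: dict) -> int:
    """Weighted average of per-metric scores (each 0.0 to 1.0), scaled to 0-100."""
    total = sum(WEIGHTS[name] * metric_scores[name] for name in WEIGHTS)
    return round(total * 100)
```

Because TBT, LCP, and CLS carry 80% of the weight under these assumptions, improving them tends to move the overall score far more than improving FCP or Speed Index.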

Why Your Lighthouse Score May Vary:

Your score may change each time you test due to browser extensions, internet connection, A/B tests, or even the ads displayed on that page load.
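
One common way to dampen this variability is to run the test several times and report the median instead of a single result. The sketch below assumes you have already collected the performance scores from repeated runs; the function name is hypothetical.

```python
from statistics import median


def summarize_runs(run_scores: list) -> dict:
    """Summarize repeated Lighthouse runs by their median and spread."""
    if not run_scores:
        raise ValueError("at least one run is required")
    return {
        # The median is less sensitive to a single unusually fast or slow run.
        "median": median(run_scores),
        # A large spread suggests the test environment is noisy.
        "spread": max(run_scores) - min(run_scores),
    }
```

Testing in an incognito window with extensions disabled should also reduce the spread between runs.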

Lighthouse Performance Metrics Explained:

Largest Contentful Paint (LCP):

Measures the page load timeline point when the page’s largest image or text block is visible within the viewport. The goal is to achieve LCP in < 2.5 seconds.

Total Blocking Time (TBT):

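Measures the total time the main thread was blocked between First Contentful Paint and Time to Interactive, that is, the sum of the portion of each long task that exceeds 50 milliseconds. The goal is to achieve a TBT of less than 200 milliseconds.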
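
A short worked example may help here. TBT counts only the blocking portion of each main-thread task, meaning the time beyond the first 50 milliseconds. The sketch below assumes you already have the durations of the tasks that ran between FCP and TTI; the function name is hypothetical.

```python
# A task is "long" once it exceeds 50 ms; only the excess counts as blocking.
BLOCKING_THRESHOLD_MS = 50


def total_blocking_time(task_durations_ms: list) -> float:
    """Sum the blocking portion of each main-thread task, in milliseconds."""
    return sum(max(0, d - BLOCKING_THRESHOLD_MS) for d in task_durations_ms)
```

For tasks of 30, 120, 50, and 250 milliseconds, only the second and fourth contribute (70 and 200 milliseconds), so splitting one long task into several short ones can reduce TBT even when the total work is unchanged.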

First Contentful Paint (FCP):

Marks the time the first text or image is painted (visible). The goal is to achieve FCP in < 2 seconds.

Speed Index:

Measures how quickly the page is visibly populated. The goal is to achieve a score of less than 4,000 milliseconds.

Time to Interactive (TTI):

Measures how long it takes for the page to become interactive. The goal is to achieve TTI in < 5 seconds.

First Meaningful Paint (FMP):

Marks the time at which the primary content of a page is visible. The goal is to achieve FMP in < 2.5 seconds.

Cumulative Layout Shift (CLS):

Measures the visual stability of the page. The goal is to achieve a score of less than 0.1.
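
CLS is not a simple sum of every layout shift on the page. Shifts are grouped into session windows (shifts less than 1 second apart, with each window capped at 5 seconds), and the score is the largest window total. The sketch below illustrates that grouping under the assumption that the input is a time-ordered list of (timestamp in milliseconds, shift score) pairs with shifts caused by recent user input already excluded. The function name is hypothetical.

```python
def cumulative_layout_shift(shifts: list) -> float:
    """Return the largest session-window total for (timestamp_ms, score) pairs."""
    worst = 0.0
    window_total = 0.0
    window_start = None
    previous = None
    for timestamp, score in shifts:
        same_window = (
            previous is not None
            and timestamp - previous < 1000      # under 1 s since the last shift
            and timestamp - window_start < 5000  # window no longer than 5 s
        )
        if same_window:
            window_total += score
        else:
            # Start a new session window at this shift.
            window_start = timestamp
            window_total = score
        previous = timestamp
        worst = max(worst, window_total)
    return worst
```

A page with two bursts of small shifts is therefore scored on the worse burst, not on both combined.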

How to Test Performance Using Lighthouse:

- Open Google Chrome and navigate to the webpage you want to test.

- Right-click anywhere on the page and select “Inspect” from the dropdown menu.

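- Open the Lighthouse tab in the DevTools panel (it may be hidden behind the “»” overflow menu) and click “Analyze page load” (labeled “Generate report” in older Chrome versions).

- Wait for the test to finish and review the results.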

JavaScript Redirects and SEO: Best Practices and Types for Optimal Performance

JavaScript redirects can be a helpful tool for webmasters, but they can also present challenges, especially when it comes to SEO. In this blog post, we will explore what JavaScript redirects are, when to use them, and the best practices to follow for SEO-friendly JavaScript redirects.

Understanding JavaScript Redirects

JavaScript redirects tell users and search engine crawlers that a page is now available at a new URL. They use JavaScript code to instruct the browser to load the new URL. JavaScript redirects are often used to inform users about changes in the URL structure and to help search engines find content that has moved.

Different Types of Redirects

Redirects fall into two broad groups: server-side redirects (such as HTTP 301 and 302 responses) and client-side redirects, which include meta-refresh redirects and JavaScript redirects. While server-side redirects are preferred for SEO, JavaScript redirects can be used when no other option is available.

Best Practices for SEO-Friendly JavaScript Redirects

To ensure that your JavaScript redirects are SEO-friendly, it is essential to follow these best practices:

Avoid Redirect Chains:

Redirect chains slow down the user experience, and Googlebot only follows a limited number of hops (Google’s documentation cites up to 10) before it gives up. Aim for a single hop, and keep any unavoidable chain well under five hops.

Avoid Redirect Loops:

Redirect loops remove all access to the resource at a URL, because the browser never reaches a final destination. Fixing a loop is usually straightforward: remove the redirect that closes the loop and point it at a functioning URL that returns 200 OK.
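
Chains and loops are easy to spot once the redirects are laid out as a mapping. The sketch below assumes you have exported your redirects as a dictionary of source URL to target URL (for example, from a crawl report). The function name and the default `max_hops` of 10 are illustrative choices, not a standard.

```python
def trace_redirects(start: str, redirects: dict, max_hops: int = 10) -> dict:
    """Follow redirects from `start` and classify the result."""
    path = [start]
    seen = {start}
    current = start
    while current in redirects:
        current = redirects[current]
        path.append(current)
        if current in seen:
            # We have returned to a URL already visited: a redirect loop.
            return {"path": path, "hops": len(path) - 1, "status": "loop"}
        seen.add(current)
        if len(path) - 1 > max_hops:
            return {"path": path, "hops": len(path) - 1, "status": "too_long"}
    hops = len(path) - 1
    # Zero or one hop is fine; anything longer is a chain worth collapsing.
    return {"path": path, "hops": hops, "status": "ok" if hops <= 1 else "chain"}
```

Running this for every source URL in the mapping shows which redirects should be collapsed into a single hop pointing straight at the final destination.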

Use Server-Side Redirects When Possible:

JavaScript redirects may not be the best SEO solution. If you have access to other redirects, such as server-side redirects, use them instead.

JavaScript redirects can be useful, but web admins should use them cautiously and only as a last resort. By following these best practices, you can ensure that your JavaScript redirects are SEO-friendly and that your website’s user experience is not compromised. Remember, server-side redirects are preferred for SEO, so use JavaScript redirects only when you have no other option available.

Optimizing Largest Contentful Paint (LCP) for Higher Google Rankings: Tips and Strategies

Understanding the Largest Contentful Paint (LCP)

The Largest Contentful Paint (LCP) is a metric that measures how quickly the main page content appears after a webpage starts loading. It is one of the most important site speed metrics for improving your search engine rankings and enhancing user experience.

The Importance of LCP for SEO

Google recognizes website speed as a crucial ranking factor, and LCP is one of the three Core Web Vitals metrics that Google uses to assess website performance. A slow LCP can lower your Google ranking and negatively impact the user experience.

What is a Good LCP Time?

To achieve a “Good” rating, your website’s LCP time should be 2.5 seconds or less. If it’s over 4 seconds, it’s rated as “Poor,” which has the most negative impact on your Google ranking. Scores between 2.5 and 4 seconds are rated as “Needs Improvement.”
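
These thresholds are easy to apply in a script. Google assesses Core Web Vitals at the 75th percentile of real page loads, so the sketch below first reduces a list of LCP samples to that percentile and then rates it. The function names are hypothetical, the percentile uses the simple nearest-rank method, and the labels follow Google’s published wording, where the slowest band is called “poor.”

```python
import math


def percentile_75(samples: list) -> float:
    """Nearest-rank 75th percentile of a non-empty list of samples."""
    if not samples:
        raise ValueError("at least one sample is required")
    ordered = sorted(samples)
    rank = math.ceil(0.75 * len(ordered))
    return ordered[rank - 1]


def rate_lcp(lcp_seconds: float) -> str:
    """Map an LCP time in seconds to its rating band."""
    if lcp_seconds <= 2.5:
        return "good"
    if lcp_seconds <= 4.0:
        return "needs improvement"
    return "poor"
```

Rating the 75th percentile rather than the average means a page only counts as good when most visitors, not just the typical one, see a fast LCP.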

Measuring Your Website’s LCP

You can easily measure your website’s LCP by running a free website speed test. The results will tell you whether your website meets the LCP threshold and whether other Core Web Vitals also need attention.

Tips for Improving Your Website’s LCP

To optimize your website’s LCP, ensure that the HTML element responsible for the LCP appears quickly. You can do this by identifying the resources necessary to make the LCP element appear and loading those resources faster or not at all. Here are some tips:

- Reduce render-blocking resources

- Prioritize and speed up LCP image requests

- Use modern image formats and size images appropriately

- Minimize third-party scripts

- Reduce server response time
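
As a starting point for the first tip, the sketch below scans a page’s HTML for resources in the head that typically block rendering: stylesheets, and scripts without `async` or `defer`. It is a rough heuristic built on Python’s standard `html.parser`, not a replacement for a Lighthouse audit. The class and function names are hypothetical, and it assumes that stylesheets with `media="print"` and module scripts are non-blocking.

```python
from html.parser import HTMLParser


class RenderBlockingFinder(HTMLParser):
    """Collect URLs of likely render-blocking resources inside <head>."""

    def __init__(self):
        super().__init__()
        self.in_head = False
        self.blocking = []

    def handle_starttag(self, tag, attrs):
        attr = dict(attrs)
        if tag == "head":
            self.in_head = True
        elif not self.in_head:
            return
        elif tag == "script" and attr.get("src"):
            # Classic scripts block parsing unless marked async or defer.
            deferred = "async" in attr or "defer" in attr or attr.get("type") == "module"
            if not deferred:
                self.blocking.append(attr["src"])
        elif tag == "link" and "stylesheet" in (attr.get("rel") or "").lower().split():
            # Assumption: print-only stylesheets do not block the first render.
            if (attr.get("media") or "all").lower() != "print" and attr.get("href"):
                self.blocking.append(attr["href"])

    def handle_endtag(self, tag):
        if tag == "head":
            self.in_head = False


def find_render_blocking(html: str) -> list:
    """Return the likely render-blocking resource URLs found in `html`."""
    finder = RenderBlockingFinder()
    finder.feed(html)
    finder.close()
    return finder.blocking
```

Each URL returned is a candidate for deferring, inlining, or removing. Resources that the LCP element genuinely needs should be loaded earlier rather than deferred.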

By measuring and optimizing your website’s LCP score, you can improve the user experience and increase your chances of ranking higher in Google. With these tips, you can effectively optimize your website’s LCP and ensure that it loads quickly for your visitors, enhancing their overall experience.

GTmetrix: A Comprehensive Guide to Using the Speed Test Tool

In today’s digital age, website performance is essential for online success. However, with countless tools and applications available, finding the right one that meets your needs can be challenging. Enter GTmetrix, a web-based tool designed to analyze website speed and uncover areas for improvement.

What is GTmetrix?

GTmetrix is a free speed test tool that evaluates a website’s load time, size, and requests and generates a score with recommendations for optimization. It’s a versatile tool that can benefit site owners, engineers, SEO professionals, and others looking to improve their website’s performance.

How to Use GTmetrix:

Using GTmetrix is a breeze – simply plug in your URL and let the tool run its analysis. You’ll receive a report that overviews your website’s performance, including the GTmetrix Grade, Web Vitals, and speed visualizations. You can also review the top issues that are broken down by topics such as First Contentful Paint (FCP), Largest Contentful Paint (LCP), Total Blocking Time (TBT), and Cumulative Layout Shift (CLS).

GTmetrix Tabs:

GTmetrix also offers various tabs that can help you gain more insights into your website’s performance. These include:

- Performance Tab: provides performance-based metrics, including FCP, Speed Index, CLS, and other browser-specific metrics.

- Structure Tab: outlines the tool’s different audits and their impact, and GTmetrix recommends focusing on specific audits found in this tab.

- Waterfall Tab: illustrates a waterfall chart and the details of each action in a waterfall approach, where you can pay attention to resources that take a long time to load.

- Video Tab: allows you to record a page load video to pinpoint different issues with the page.

- History Tab: enables you to view graphs that display changes over time to page metrics such as page size, Time to Interactive, and scores.

GTmetrix Measurement:

GTmetrix produces an overall grade that combines a performance score, which reflects how quickly the page loads for users, with a structure score, which reflects how well the page is built. The performance score is comparable to a Lighthouse Performance Score, and the structure score combines GTmetrix’s proprietary custom audits with the Lighthouse audits.

Web Vitals:

In addition, GTmetrix highlights metrics that Google uses to determine whether a website provides “a delightful experience.” These include:

- Largest Contentful Paint (LCP): refers to the time it takes for the largest element on your page to load where the user can see it.

- Total Blocking Time (TBT): measures your website’s load responsiveness to user input.

- Cumulative Layout Shift (CLS): measures unexpected shifting of page elements while the page is loading.

In conclusion, GTmetrix is an invaluable tool for optimizing and improving your website’s performance. It provides a comprehensive assessment of your site’s well-being and identifies factors that can affect your search engine visibility. Try GTmetrix today, and take your website’s performance to the next level.

Enterprise SEO: Enhancing Website Optimization with Website Intelligence Use Cases

As the digital landscape continues to evolve, website optimization has become increasingly complex, leaving many digital marketers feeling overwhelmed. However, website intelligence has emerged as a powerful tool for identifying hidden issues and discovering new opportunities to drive more traffic and conversions.

What is Website Intelligence?

Website Intelligence involves using data and insights to spot hidden issues and discover new opportunities to improve website performance, resulting in increased traffic and conversions.

Enhance Accessibility for All Users:

With a significant portion of the population living with disabilities, accessible websites are critical for both customer consideration and legal compliance. Website intelligence can help identify accessibility issues and provide recommendations for improvement.

Boost Website Speed by Addressing CSS Performance:

Poorly structured style sheets can negatively impact user experience and search engine rankings, leading to slow-rendering pages. By addressing issues with CSS performance, website speed can be increased, leading to higher user engagement and improved search engine rankings.

Optimize Image Load Times for a Better User Experience:

Images are a critical aspect of a website’s user experience, but they can also slow down a site’s load time if not optimized properly. Website intelligence can help identify image loading speed issues and provide image optimization suggestions.

Ensure Site Security for User Protection:

Site security is critical for protecting user data and preventing malicious attacks. Website intelligence tools can help identify security vulnerabilities and recommend strengthening website security.

Foster Collaboration Between SEO Professionals and Developers:

Collaboration between SEO professionals and developers is essential for successful website optimization. Establishing standards and running pre-production scans can identify and resolve potential issues before they impact website performance.

Combine Marketing and Technical SEO Efforts:

Marketing and technical SEO are closely intertwined and can significantly impact a business’s success. Website intelligence can help identify opportunities for optimizing website health and marketing efforts.

Analyze Customer Reviews for Insights:

Customer reviews provide valuable insights into product and service performance. Website intelligence tools can help analyze customer feedback from multiple sources, providing actionable insights for improving customer satisfaction and overall success.

Key Takeaways:

- Website intelligence is a valuable tool for improving website optimization at an enterprise level.

- By leveraging data and insights to address issues and identify opportunities for improvement, businesses can adapt to the evolving nature of SEO and drive more traffic and conversions.

- Collaboration between SEO professionals, developers, and marketing teams such as corporate marketers and affiliates is critical for achieving website optimization goals, and website intelligence tools can provide the command center needed to make informed decisions and drive success.

Technical SEO Audits with Chrome Canary: A Step-by-Step Guide

Chrome Canary is a powerful tool for technical SEO audits, thanks to its experimental features that are not yet available in the stable release. Here are some ways in which Chrome Canary can help with technical SEO audits:

Auditing Performance with Chrome Canary

The Performance panel in Chrome DevTools is a powerful feature that can help identify performance bottlenecks and optimize website performance. It allows you to analyze network activity, CPU usage, JavaScript performance, and more.

Automate Your Page Speed Performance Reporting

Chrome Canary allows you to automate your page speed performance reporting, saving you time when performing technical SEO audits.

Automate Timeline Traces For Synthetic Testing

With Chrome Canary, you can run synthetic tests that approximate your users’ real-world experience. Set up user flow recordings in the Recorder panel and export the script to WebPageTest.

Web Platform API Testing On Chrome Canary

The Chrome engineering team publishes experimental APIs that developers can use for automated web performance testing. Real User Monitoring (RUM) analytics providers use Chrome’s APIs to track and report real users’ CWVs.

Back/Forward Cache Testing for Faster Page Navigation

Modern browsers have introduced a Back/Forward Cache (bfcache) feature that captures a snapshot of the page in the browser’s memory when you visit it. This cache loads pages faster and without making a new network request to your server, providing a quicker page load experience to users who navigate back to a previously visited page on your site. Chrome 96 Stable release shipped the bfcache test in the Application panel, which checks pages if the Back/Forward caching is being deployed.

Fixing Analytics Underreporting From Bfcache Browser Feature

The bfcache browser optimization is automatic, but it does impact CWV. Analytics tools may underreport pageviews when a page is restored from the bfcache, because the page does not load again. Make sure your analytics setup detects bfcache restores, and test your website to confirm that your important pages are eligible for it.

New Update to the Back/Forward Cache Testing API

The new NotRestoredReasons API improves error reporting for bfcache issues, and it will ship with Stable Chrome 111.

Identifying Render-Blocking Resources with the Performance API

Chrome 107 shipped a new feature for the Performance API that identifies render-blocking resources, such as CSS, that delay the loading of a site. When a browser comes across a stylesheet, it holds the page loading up until it finishes reading the file. A browser needs to understand the layout and design of a page before it can render and paint a website. Devs can help minimize re-calculation, styling, and repainting to prevent website slowdowns.

HTTP Response Status Codes Reporting with the Resource Timing API

Chrome 109 shipped with a new feature for the Performance API that captures HTTP response codes, thereby enabling better HTTP response status code reporting for the Resource Timing API. This feature enables developers and SEOs to segment their RUM analytics for page visits that result in 4XX and 5XX response codes.
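
Once those entries reach your analytics backend, segmenting them is straightforward. The sketch below assumes each RUM record is a dictionary carrying the `responseStatus` value reported by the browser. The record shape and function names are hypothetical, and a missing or zero status is treated as unknown, on the assumption that the browser could not expose it.

```python
from collections import Counter


def status_class(status: int) -> str:
    """Map an HTTP status code to its class, such as '4xx'."""
    if not status:
        # Assumption: 0 or missing means the status was not exposed.
        return "unknown"
    return f"{status // 100}xx"


def segment_by_status(entries: list) -> Counter:
    """Count RUM entries per HTTP status class."""
    return Counter(status_class(e.get("responseStatus", 0)) for e in entries)
```

Tracking the 4xx and 5xx counts separately from normal page visits keeps error pages from skewing your performance averages.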

Interaction to Next Paint (INP) Metric

Google launched its experimental Interaction to Next Paint (INP) metric at Google I/O 2022. INP observes the latency of click, tap, and keyboard interactions throughout the page’s lifetime and reports one of the slowest, rather than an average. This metric is designed to capture user interactions beyond the first one, which is all that First Input Delay (FID) measures. According to Jeremy Wagner, “Chrome usage shows that 90% of a user’s activity happens after the initial page load.” INP replaced FID as a Core Web Vital in March 2024, so optimizing for INP now directly affects your Core Web Vitals assessment.
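
To illustrate how a single INP value can be derived from many interactions, the sketch below follows Google’s published description: the value is the slowest interaction latency, except that one of the highest latencies is ignored for every 50 interactions so that a single outlier on a busy page does not dominate. The function name is hypothetical, and the input is assumed to be a list of interaction latencies in milliseconds.

```python
def interaction_to_next_paint(latencies_ms: list):
    """Return the INP value for a page visit, or None if nothing was measured."""
    if not latencies_ms:
        return None
    ordered = sorted(latencies_ms, reverse=True)
    # Ignore one of the worst interactions per 50 interactions observed.
    skip = min(len(ordered) - 1, len(ordered) // 50)
    return ordered[skip]
```

Google’s published thresholds rate an INP of 200 milliseconds or less as good and anything above 500 milliseconds as poor.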

Final Words

Mastering advanced technical SEO techniques is essential for experienced SEO specialists who want to stay ahead of the competition and achieve higher rankings in search engine results pages. Optimizing website architecture, improving website speed and security, and implementing other advanced techniques can give your website a competitive edge and attract more organic traffic.

Remember that technical SEO is an ongoing process requiring continuous learning and adaptation to stay up-to-date with industry trends and algorithm updates.

So, continue to explore new techniques and best practices, test and measure your results, and make data-driven decisions to improve your website’s performance. With the knowledge and skills gained from this guide, you’ll be well on your way to becoming an expert in technical SEO and achieving your website’s full potential.